The Analytics Stack: What You're Actually Measuring in eCommerce

By Diosh — Founder, AHAeCommerce | eCommerce decision intelligence for $50K–$5M GMV operators

The median DTC brand at $1M–$5M GMV runs four analytics tools that report 47 unique metrics across their combined dashboards. The same brand uses approximately 6 of those metrics to make actual operating decisions. The other 41 are noise — vanity metrics that look meaningful, change frequently, and get reported in weekly meetings, but never trigger an operator action. This is the analytics stack problem, and it's a measurement-philosophy problem before it's a tool problem. Brands routinely solve it by buying another tool, adding more dashboards, and producing more reports — which makes the original problem worse.

The fix is structural. Define the 6 metrics that drive operating decisions, build the stack to produce those 6 cleanly, and ignore everything else. The tools are downstream of the philosophy. Most operators do this in the wrong order — pick the tool first, then try to extract decision-quality data from it — and end up with the 47-metric noise problem regardless of which platform they chose.

The Default Assumption (and Why It Fails)

The default operator framing for analytics is that more measurement equals more clarity. Track everything you can, build dashboards that surface it all, and somehow the right insights will emerge from the data. This produces stacks that combine GA4, a platform-native dashboard (Shopify, BigCommerce), an attribution tool (Northbeam, Triple Whale, Rockerbox), an email analytics layer (Klaviyo), and possibly a BI tool (Looker, Mode, Lifetimely) — each producing its own metrics, each with its own definition of "revenue," each requiring its own reconciliation effort.

The framing fails for three structural reasons.

First, more metrics dilute attention. Forrester's 2024 retail analytics research found that operators with 30+ metrics on their primary dashboard made decisions on an average of 4–7 of those metrics, the same number as operators with 10-metric dashboards. The remaining 20+ metrics consumed reporting time, generated reconciliation friction across tools, and produced no incremental decisions. Adding metrics past a low ceiling (roughly 8–12 for a typical operator) produces a measurable, cognitive-load-driven reduction in decision quality.

Second, tool-native metrics rarely agree with each other. Shopify's "revenue" treats returns and cancellations differently from GA4's "purchase value," which differs from Triple Whale's "net revenue," which differs from a custom data warehouse's "recognized revenue." Operators spend hours each month reconciling these differences and reporting metrics whose interpretation changes depending on which tool produced them. The reconciliation effort produces no business outcome and consumes operating time that should go to using the data.

Third, vanity metrics crowd out leading indicators. Sessions, page views, average time on site, and bounce rate are easy to measure and feel meaningful, but they are lagging indicators of business performance — they tell you what already happened, not what's about to happen. Leading indicators (cart-add rate trajectory, channel-cohort repeat purchase rate, pre-purchase email engagement rate) are harder to extract from default tool dashboards and rarely make it to the metrics operators actually watch. The result is that operators react to past performance rather than anticipating future performance.

What the Decision Actually Hinges On

The 3 Operating Decisions That Matter Most

The first decision input is recognizing that eCommerce operators make exactly three categories of recurring decisions, and the analytics stack should be built around them rather than around the tool's default reports.

The first decision category is acquisition allocation: where to put marketing dollars next month. The second is conversion optimization: what to test or fix on the site. The third is retention investment: which post-purchase initiatives to fund. Every other decision (pricing, inventory, product development, team) is downstream of these three and is informed by the same underlying data signals.

The implication is that the analytics stack should produce 1–2 decision-grade metrics for each category, totaling 6 metrics that drive operating decisions, and treat everything else as supplemental context. McKinsey's 2024 retail analytics research found that brands with this structured 6-metric approach made operating decisions 2.4x faster on average than brands with 30+ metric dashboards, with no measurable degradation in decision quality.

The Difference Between Leading and Lagging Indicators

The second decision input is whether each tracked metric is a leading or lagging indicator. Lagging indicators (revenue, conversion rate, AOV, profit margin) describe what already happened. Leading indicators (cart-add rate, email engagement rate, repeat-purchase rate of recent cohorts, channel-cohort CAC trajectory) predict what's about to happen and give operators 30–90 days of warning before lagging indicators reflect the change. For acquisition decisions specifically, the leading indicator is marginal CAC trajectory; blended ROAS is too aggregated to surface the crossover point where incremental spend destroys value.
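To make the gap between a blended figure and a marginal one concrete, here is a minimal Python sketch. The monthly spend and customer counts are invented for illustration, the function names are hypothetical, and the sketch assumes the month-to-month change in customers is attributable to the change in spend, which real data rarely guarantees.

```python
from typing import List, Tuple

# Hypothetical monthly data: (paid spend in dollars, new customers acquired).
# These numbers are invented for illustration only.
MONTHS: List[Tuple[float, int]] = [
    (10_000, 250),
    (15_000, 350),
    (20_000, 420),
    (25_000, 460),
]


def blended_cac(spend: float, customers: int) -> float:
    """Total spend divided by total new customers for one period."""
    return spend / customers


def marginal_cac(prev: Tuple[float, int], curr: Tuple[float, int]) -> float:
    """Cost of each additional customer bought by the incremental spend."""
    extra_spend = curr[0] - prev[0]
    extra_customers = curr[1] - prev[1]
    if extra_customers <= 0:
        return float("inf")  # more spend bought no additional customers
    return extra_spend / extra_customers


blended = [blended_cac(s, c) for s, c in MONTHS]
marginal = [marginal_cac(a, b) for a, b in zip(MONTHS, MONTHS[1:])]

for month, value in enumerate(blended, start=1):
    print(f"month {month}: blended CAC ${value:.2f}")
for month, value in enumerate(marginal, start=2):
    print(f"month {month}: marginal CAC ${value:.2f}")
```

In this made-up series the blended figure drifts from $40 to about $54 while the marginal figure climbs from $50 to $125, which is the kind of divergence a blended number hides.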

Brands that build their analytics stack around lagging indicators react to changes after they've fully propagated — a revenue decline becomes visible in week 8, but the cart-add rate decline that caused it was visible in week 2 if anyone was watching. Statista's 2024 retail analytics benchmarks found that brands with at least 2 leading indicators in their primary dashboard identified emerging problems 6–10 weeks earlier than brands measuring lagging indicators only.
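One way to watch a leading indicator is a simple threshold check against a baseline. The sketch below is an assumption-laden illustration: the weekly cart-add rates are invented, and the 4-week baseline and 10% drop threshold are arbitrary choices, not benchmarks from the research cited above.

```python
from statistics import mean
from typing import List, Optional


def first_alert_week(
    rates: List[float],
    baseline_weeks: int = 4,      # assumed baseline length
    drop_threshold: float = 0.10,  # assumed relative drop that triggers an alert
) -> Optional[int]:
    """Return the 1-based week in which the rate first falls more than
    drop_threshold below the baseline average, or None if it never does."""
    baseline = mean(rates[:baseline_weeks])
    floor = baseline * (1 - drop_threshold)
    for week, rate in enumerate(rates[baseline_weeks:], start=baseline_weeks + 1):
        if rate < floor:
            return week
    return None


# Hypothetical weekly cart-add rates (cart adds / sessions).
weekly_cart_add_rate = [0.080, 0.081, 0.079, 0.080, 0.078, 0.071, 0.068, 0.066]
alert = first_alert_week(weekly_cart_add_rate)
print(f"first alert in week: {alert}")
```

A check like this only earns its place if someone reviews the alert and ties it to a mechanism; the threshold itself should be tuned to the brand's normal week-to-week variance.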

The Source-of-Truth Question

The third decision input is which tool is the canonical source of truth for each metric category. Operators who skip this question end up with the reconciliation problem: every metric exists in 2–4 tools with slightly different values, and someone spends time each week explaining the differences. Operators who answer it cleanly assign one tool as canonical for each category and treat the others as supplemental.

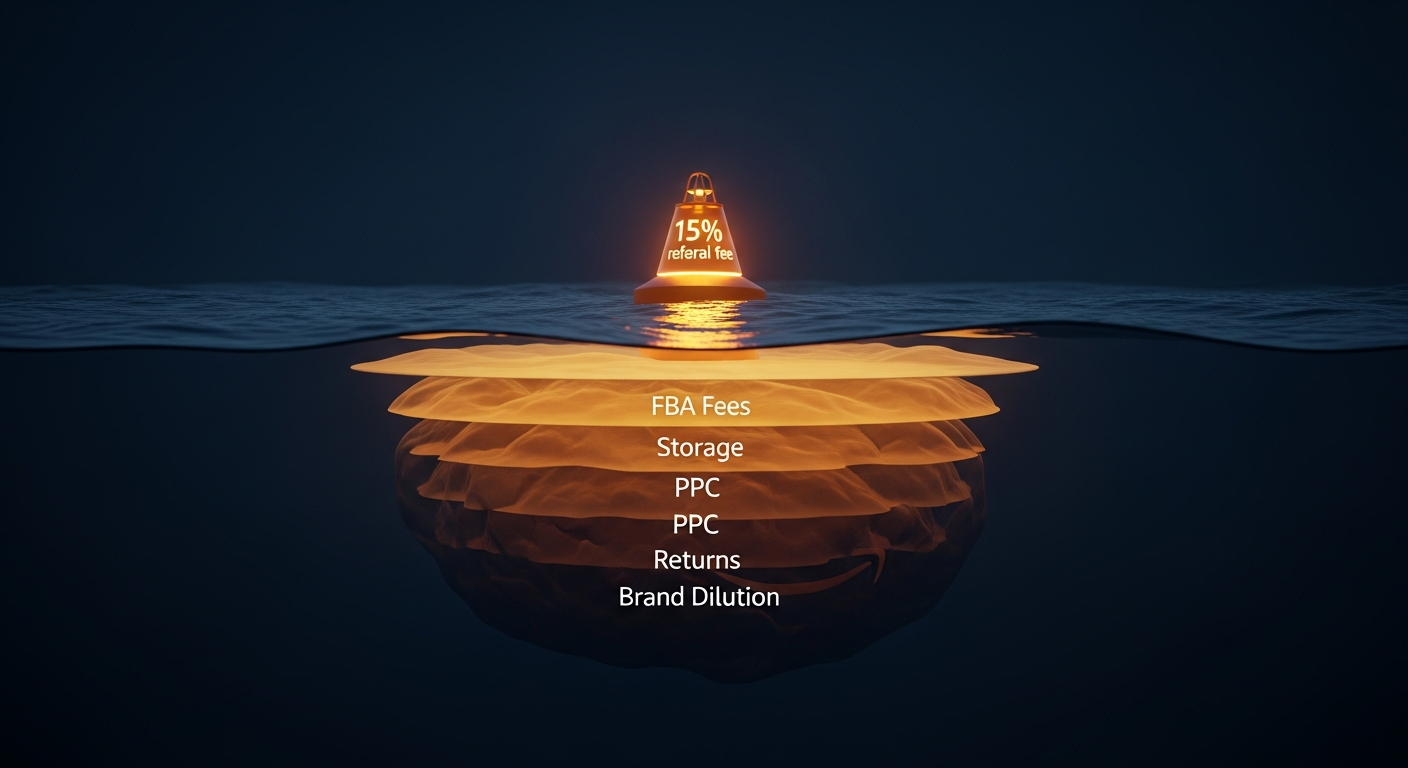

The pragmatic assignments for most DTC brands at $1M–$5M GMV: the platform itself (Shopify, BigCommerce) is canonical for orders, revenue, and gross margin. The attribution platform (Triple Whale, Northbeam, Rockerbox) is canonical for channel-level CAC. The email platform (Klaviyo) is canonical for retention metrics. GA4 is canonical for site behavior (sessions, page views, conversion funnels) but should not be treated as canonical for revenue or order data because it consistently reports 5–15% lower than platform truth due to ad-blocker and tracking-prevention impacts.
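The assignments above can be written down as an explicit lookup so that nobody has to re-argue which number wins. This is a hedged sketch: the category keys, tool labels, and the `canonical_value` helper are hypothetical names chosen for illustration, and the revenue readings are invented.

```python
from typing import Dict

# Canonical tool per metric category, following the assignments described above.
# Keys and tool labels are hypothetical names for this sketch.
SOURCE_OF_TRUTH: Dict[str, str] = {
    "orders": "platform",
    "revenue": "platform",
    "gross_margin": "platform",
    "channel_cac": "attribution",
    "retention": "email",
    "site_behavior": "ga4",
}


def canonical_value(category: str, readings: Dict[str, float]) -> float:
    """Return the reading from the canonical tool and ignore the others."""
    tool = SOURCE_OF_TRUTH[category]
    if tool not in readings:
        raise KeyError(f"no reading from canonical tool '{tool}' for '{category}'")
    return readings[tool]


# Three tools report different revenue; only the platform figure is used.
revenue = canonical_value(
    "revenue",
    {"platform": 182_400.0, "ga4": 164_900.0, "attribution": 176_300.0},
)
print(f"reported revenue: ${revenue:,.0f}")
```

The non-canonical readings are still useful as a sanity check, but they stop being something that has to be explained in the weekly meeting.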

The Cost Reality

The following table compares two approaches to analytics stack cost across a 2-year window for a brand at $2M GMV.

| Cost/Effort Line | Tool-Maximalist Stack (5+ tools, 30+ metrics) | Decision-First Stack (3 tools, 6 metrics) |
|---|---|---|
| Tool subscriptions (annual) | $9,600–$24,000 (Triple Whale + Klaviyo + GA4 + Lifetimely + Looker) | $5,400–$12,000 (Triple Whale + Klaviyo + Shopify analytics) |
| Setup and integration cost | $8,000–$18,000 | $3,000–$7,000 |
| Ongoing reconciliation labor (5h/week × $50/hr) | $13,000/year | $2,600/year (1h/week) |
| Dashboard maintenance | $4,000–$8,000/year | $1,500/year |
| Decision speed (median time from question to answer) | 4–7 days | 1–2 days |
| 2-year total cost | $53,200–$102,000 | $19,000–$36,200 |
| Decision-velocity advantage | n/a | 2.5–3.5x |

The cost gap is significant — $34,000–$66,000 over two years for a $2M brand — but the more important difference is decision velocity. Operators using the decision-first stack make operating calls on a 1–2 day cycle. Operators using the tool-maximalist stack make the same calls on a 4–7 day cycle, because the data has to be reconciled before it can be trusted. McKinsey's 2024 analytics research identified decision velocity as a stronger predictor of operating performance than analytical depth, finding that brands with 2–3x faster decision cycles outperformed analytical-depth competitors by 12–18% on revenue growth over 24-month windows.
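The labor line and the two-year gap can be reproduced in a few lines of Python. This sketch takes the hourly rate, weekly hours, and two-year totals directly from the table and assumes a 52-week year; the variable names are illustrative.

```python
WEEKS_PER_YEAR = 52  # assumption: labor is incurred every week of the year
HOURLY_RATE = 50     # dollars per hour, from the table


def annual_reconciliation_cost(hours_per_week: float) -> float:
    """Yearly cost of reconciliation labor at the table's hourly rate."""
    return hours_per_week * HOURLY_RATE * WEEKS_PER_YEAR


maximalist_labor = annual_reconciliation_cost(5)
decision_first_labor = annual_reconciliation_cost(1)

# 2-year totals as stated in the table: (low, high) in dollars.
maximalist_total = (53_200, 102_000)
decision_first_total = (19_000, 36_200)

gap = (
    maximalist_total[0] - decision_first_total[0],
    maximalist_total[1] - decision_first_total[1],
)
labor_gap_two_years = 2 * (maximalist_labor - decision_first_labor)

print(f"annual labor: ${maximalist_labor:,.0f} vs ${decision_first_labor:,.0f}")
print(f"2-year gap: ${gap[0]:,} to ${gap[1]:,}")
print(f"of which reconciliation labor: ${labor_gap_two_years:,.0f}")
```

Reconciliation labor alone accounts for $20,800 of the two-year gap, which is why the hours matter more than the subscription prices.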

The arithmetic that converts this into operator-relevant terms: a brand with 12 working days a month available for operating decisions closes 4 decisions at a 3-day average cycle time. At a 1.5-day cycle time, the same 12 days close 8 decisions. Doubling decision velocity is the same as doubling effective management capacity without adding headcount.

The Trade-Off Map

Decision-First Stack (Recommended)

The benefit of a decision-first stack is operating velocity and cost discipline: $19K–$36K over two years, 1–2 day decision cycles, and a maintenance overhead that scales with the brand rather than against it. The cost is analytical depth — operators who want to ask exotic questions ("did Tuesday afternoon Meta spend perform differently from Thursday morning?") will hit limits faster than they would with a maximalist stack. For most $50K–$5M GMV brands, this trade-off is favorable: the exotic questions don't drive operating decisions; the 6 core metrics do.

Tool-Maximalist Stack

The benefit of a maximalist stack is analytical optionality. When a question arises, the data is somewhere in the stack, and a sufficiently motivated analyst can extract it. The cost is decision velocity, ongoing reconciliation labor, and the structural temptation to optimize on noise. Forrester's 2024 retail research found that maximalist stacks are appropriate for brands above $20M GMV with dedicated analyst headcount, where the marginal value of analytical depth exceeds the cost of complexity. Below that threshold, the maximalist stack consistently produces worse operating outcomes than the decision-first alternative.

Hybrid Approach (Decision-First + Periodic Deep-Dive)

The third option is to run a decision-first stack for ongoing operations and supplement with periodic deep-dive analyses (quarterly cohort studies, semi-annual attribution audits) that don't require a permanent tool subscription. This pattern works well for brands at $1M–$5M GMV that occasionally need analytical depth but cannot justify the ongoing cost of a maximalist stack. The trade-off is that deep-dive analyses are slower to execute (typically 2–4 weeks of analyst time per cycle), so they cannot replace ongoing decision-grade metrics.

When to Build Each Layer (Specific Triggers)

Trigger 1: Acquisition Layer — When Paid Spend Exceeds $10K/Month

Build the acquisition analytics layer (channel CAC, channel cohort repeat rate) when monthly paid spend exceeds $10,000 or when the brand has more than two paid channels active. Below this threshold, GA4 plus platform-native reports are sufficient and cheaper. Above it, channel-level attribution with multi-touch context (Triple Whale, Northbeam, Rockerbox) becomes the canonical source for the two acquisition-decision metrics: blended CAC trajectory and channel-cohort 90-day LTV.

Trigger 2: Conversion Layer — When AOV Has Plateaued for 90+ Days

Build the conversion analytics layer (cart-add rate by source, checkout abandonment by step) when blended AOV or conversion rate has been flat for 90+ days. The two conversion-decision metrics are cart-add rate by traffic source and checkout completion rate by step. Below the plateau threshold, conversion analytics are nice-to-have but not decision-grade; once the brand is optimizing for conversion lift, this layer becomes mandatory.
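Both conversion-decision metrics are simple ratios once the counts are in one place. The following Python sketch uses invented session and funnel counts, and the source and step names are hypothetical; real checkout steps depend on the platform.

```python
from typing import Dict, List, Tuple

# Hypothetical counts per traffic source: (sessions, sessions with a cart add).
SESSIONS_BY_SOURCE: Dict[str, Tuple[int, int]] = {
    "meta_paid": (12_000, 840),
    "google_paid": (8_000, 720),
    "email": (3_000, 450),
}

# Hypothetical counts of shoppers reaching each checkout step, in order.
CHECKOUT_FUNNEL: List[Tuple[str, int]] = [
    ("cart", 2_010),
    ("information", 1_407),
    ("shipping", 1_126),
    ("payment", 1_013),
    ("order_complete", 912),
]


def cart_add_rate_by_source(data: Dict[str, Tuple[int, int]]) -> Dict[str, float]:
    """Cart adds divided by sessions, per traffic source."""
    return {src: adds / sessions for src, (sessions, adds) in data.items()}


def step_completion_rates(funnel: List[Tuple[str, int]]) -> Dict[str, float]:
    """Share of shoppers at each step who reach the next step."""
    return {
        f"{a}->{b}": n_b / n_a
        for (a, n_a), (b, n_b) in zip(funnel, funnel[1:])
    }


rates = cart_add_rate_by_source(SESSIONS_BY_SOURCE)
steps = step_completion_rates(CHECKOUT_FUNNEL)

for source, rate in rates.items():
    print(f"{source}: {rate:.1%} cart-add rate")
for step, rate in steps.items():
    print(f"{step}: {rate:.1%} completion")
```

Splitting by source and by step is what makes the numbers actionable: a weak step or a weak source points at a specific test, where a single blended conversion rate does not.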

Trigger 3: Retention Layer — When the Brand Has 18+ Months of Customer Data

Build the retention analytics layer (cohort repeat purchase rate, time-to-second-purchase distribution) when the brand has at least 18 months of customer data. The two retention-decision metrics are 12-month cohort repeat purchase rate and median time-to-second-purchase. Churn signals embedded in cohort repeat purchase data are the earliest indicator that retention economics are eroding. Below 18 months of data, retention analysis produces noisy estimates with low confidence; above it, the metrics become reliable and decision-grade. Klaviyo or a custom Supabase query handles this cleanly without additional tool cost.
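As a rough illustration of the two retention metrics, the sketch below computes them for a single acquisition cohort. The order history is invented, the 365-day window stands in for "12-month," and the median is taken only over customers who repurchased inside the window; all of these are assumptions for the example rather than a prescribed method.

```python
from datetime import date
from statistics import median
from typing import Dict, List

# Hypothetical order history for one January 2023 cohort: customer id -> order dates.
ORDERS: Dict[str, List[date]] = {
    "c1": [date(2023, 1, 5), date(2023, 2, 19)],
    "c2": [date(2023, 1, 12)],
    "c3": [date(2023, 1, 20), date(2023, 6, 3), date(2023, 9, 1)],
    "c4": [date(2023, 1, 28), date(2024, 3, 10)],  # repeat falls outside the window
}


def days_to_second_purchase(
    orders: Dict[str, List[date]], window_days: int = 365
) -> List[int]:
    """Days between first and second order, for customers who
    repurchased within window_days of their first order."""
    gaps = []
    for dates in orders.values():
        ordered = sorted(dates)
        if len(ordered) >= 2:
            gap = (ordered[1] - ordered[0]).days
            if gap <= window_days:
                gaps.append(gap)
    return gaps


gaps = days_to_second_purchase(ORDERS)
repeat_rate_12m = len(gaps) / len(ORDERS)
median_days_to_second = median(gaps)

print(f"12-month cohort repeat purchase rate: {repeat_rate_12m:.0%}")
print(f"median time-to-second-purchase: {median_days_to_second} days")
```

With only four customers the output is meaningless as a benchmark, which mirrors the point about sample size: the same calculation on a thin history produces numbers that look precise and are not.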

What Operators Get Wrong Most Often

Mistake 1: Adding Tools Before Defining Metrics

The most common stack-construction mistake is buying a tool first, then trying to extract decision-grade metrics from it. The right sequence is the reverse: identify the 6 metrics that drive operating decisions, then choose the smallest tool stack that produces those metrics cleanly. Operators who follow this sequence consistently end up with simpler, cheaper stacks that produce faster decisions. Operators who buy tools first consistently end up with stack bloat — multiple tools producing overlapping metrics, no clear source of truth, and reconciliation labor consuming operating time. This is also where technical debt accumulates in the analytics layer: each abandoned tool leaves behind tracking tags, pixel fragments, and disconnected data flows that compound over time.

Mistake 2: Treating Dashboards as Insight Machines

The second mistake is treating dashboards as a substitute for analysis. Dashboards display metrics; they don't interpret them. A dashboard that shows revenue is up 8% week-over-week tells you the number went up but not why or whether the change is durable. Operators who delegate interpretation to the dashboard make pattern-matching decisions ("revenue up = good, revenue down = bad") rather than mechanism-grounded decisions ("revenue up because of a CAC efficiency gain in Meta, which is durable because the new creative is producing it"). Building the analytical interpretation layer — usually a 30-minute weekly review of the 6 metrics by an operator who can connect them to mechanisms — is what converts dashboards from reporting infrastructure into decision infrastructure.

The Verdict

Build the analytics stack around the 6 metrics that drive operating decisions, not around the tools that produce metrics. The 6 are: blended CAC trajectory and channel-cohort 90-day LTV (acquisition), cart-add rate by source and checkout completion rate by step (conversion), 12-month cohort repeat purchase rate and median time-to-second-purchase (retention). A 3-tool stack built to produce these 6 cleanly costs $19K–$36K over two years and supports 1–2 day decision cycles. A 5+ tool stack that reports 30+ metrics costs $53K–$102K and supports 4–7 day decision cycles. This week, list every metric on your current primary dashboard, identify which ones triggered an operating decision in the last 90 days, and remove or deprioritize the rest. If fewer than 8 metrics survived the cut, you have a clear path to a faster, cheaper stack.
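The audit described above can be run as a short script once each metric is tagged with the date it last triggered an operating decision. This is a minimal sketch with hypothetical metric names and dates; the 90-day window comes from the text, and the `audit_dashboard` helper is an illustrative name.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional, Tuple


def audit_dashboard(
    last_decision: Dict[str, Optional[date]],
    today: date,
    window_days: int = 90,
) -> Tuple[List[str], List[str]]:
    """Split metrics into (keep, drop) based on whether each one
    triggered an operating decision within the last window_days."""
    cutoff = today - timedelta(days=window_days)
    keep: List[str] = []
    drop: List[str] = []
    for metric, when in last_decision.items():
        if when is not None and when >= cutoff:
            keep.append(metric)
        else:
            drop.append(metric)
    return keep, drop


# Hypothetical dashboard: metric -> date it last triggered a decision (None = never).
dashboard = {
    "blended_cac_trajectory": date(2025, 6, 2),
    "cart_add_rate_by_source": date(2025, 5, 14),
    "sessions": None,
    "bounce_rate": date(2024, 11, 20),
}

keep, drop = audit_dashboard(dashboard, today=date(2025, 6, 30))
print("keep:", keep)
print("remove or deprioritize:", drop)
```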